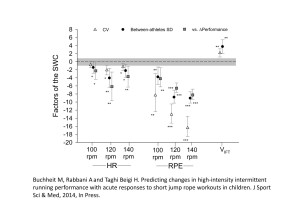

This paper shows how the way the smallest worthwhile change (SWC) is determined affects the interpretation of the magnitude of changes in monitoring variables.

Buchheit M, Rabbani A and Taghi Beigi H. Predicting changes in high-intensity intermittent running performance with acute responses to short jump rope workouts in children. J Sports Sci Med, 2014, In Press.

The aims of the present study were to 1) examine whether individual heart rate (HR) and rating of perceived exertion (RPE) responses to a jump rope workout could be used to predict changes in high-intensity intermittent running performance in young athletes, and 2) examine the effect of using different methods to determine a smallest worthwhile change (SWC) on the interpretation of group-average and individual changes in the variables. Before and after an 8-week high-intensity training program, 13 child athletes (10.6±0.9 yr) performed a high-intensity running test (30-15 Intermittent Fitness Test, final velocity VIFT) and three jump rope workouts, during which HR and RPE were collected. The SWC was defined either as 1/5th of the between-subject standard deviation or as the typical error of the variable, expressed as a coefficient of variation (CV). After training, the large ≈9% improvement in VIFT was very likely, irrespective of the SWC. Standardized changes were greater for RPE (very likely to almost certain, ~30-60% changes, ~4-16 times > SWC) than for HR (likely to very likely, ~2-6% changes, ~1-6 times > SWC) responses. Using the CV as the SWC led to the smallest standardized changes for HR and the largest for RPE. The predictive value for individual performance changes tended to be better for HR (74-92%) than for RPE (69%), and was greater when using the CV as the SWC. The predictive value for no performance change was low for both measures (<26%). Substantial decreases in HR and RPE responses to short jump rope workouts can predict substantial improvements in high-intensity running performance at the individual level. Using the CV of test measures as the SWC might be the better option.
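A minimal Python sketch of the two SWC definitions described in the abstract (1/5 of the between-subject SD, or the typical error expressed as a CV) and of one possible way to score individual predictions. The function names, the hypothetical numbers, and the exact definitions of the positive and negative predictive values are assumptions for illustration only; the study's own calculations may differ.

```python
import math
import statistics


def swc_from_between_sd(baseline, fraction=0.2):
    """SWC as a fraction (1/5 by default) of the between-subject SD."""
    return fraction * statistics.stdev(baseline)


def typical_error(trial1, trial2):
    """Typical error from two repeated trials: SD of the differences / sqrt(2)."""
    diffs = [b - a for a, b in zip(trial1, trial2)]
    return statistics.stdev(diffs) / math.sqrt(2)


def cv_percent(trial1, trial2):
    """Typical error expressed as a coefficient of variation (% of the grand mean)."""
    grand_mean = statistics.mean(trial1 + trial2)
    return 100 * typical_error(trial1, trial2) / grand_mean


def predictive_values(pred_changes, perf_changes, swc_pred, swc_perf):
    """Hypothetical definitions, which may differ from the paper's:
    positive = share of athletes with a substantial predictor decrease
               (change < -swc_pred) who also improved substantially (> +swc_perf);
    negative = share of the remaining athletes who did NOT improve substantially."""
    pairs = list(zip(pred_changes, perf_changes))
    flagged = [p for x, p in pairs if x < -swc_pred]
    others = [p for x, p in pairs if x >= -swc_pred]
    ppv = sum(p > swc_perf for p in flagged) / len(flagged) if flagged else math.nan
    npv = sum(p <= swc_perf for p in others) / len(others) if others else math.nan
    return ppv, npv


# Hypothetical numbers for illustration only (not the study's data).
baseline_vift = [16.0, 18.0, 20.0]                   # km/h
swc_sd = swc_from_between_sd(baseline_vift)          # 0.2 * 2.0 = 0.4 km/h
swc_te = typical_error([16.0, 18.0], [17.0, 18.0])   # about 0.5 km/h
mean_change = 1.6                                    # km/h
print(f"{mean_change / swc_sd:.1f} x SWC (between-SD) vs "
      f"{mean_change / swc_te:.1f} x SWC (typical error)")
# prints "4.0 x SWC (between-SD) vs 3.2 x SWC (typical error)"

hr_change = [-5.0, -4.0, -1.0, -6.0]    # change in workout HR, bpm
vift_change = [1.0, 0.2, 1.0, 0.8]      # change in VIFT, km/h
ppv, npv = predictive_values(hr_change, vift_change, swc_pred=2.0, swc_perf=0.5)
print(f"PPV={ppv:.2f}, NPV={npv:.2f}")  # prints "PPV=0.67, NPV=0.00"
```

In this toy example the same 1.6 km/h change is 4.0 times one SWC but 3.2 times the other, so the choice of SWC alone changes how large the effect appears, and the same choice also decides which individuals are flagged as having changed substantially.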

Key words: submaximal heart rate; rating of perceived exertion; OMNI scale; 30-15 Intermittent Fitness Test; progressive statistics.

- Hopkins – How to Interpret Changes in an Athletic Performance Test
- Hopkins – Progressive statistics in sports…
- Batterham – Making Meaningful Inferences about magnitudes
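The qualitative terms in the abstract ("likely", "very likely", "almost certain") appear to follow the magnitude-based approach described in the references above, where the chance that the true change exceeds the SWC is mapped onto a probability scale. A minimal Python sketch, assuming a normal approximation (the original approach typically uses the t distribution with the sample's degrees of freedom), that an increase is the direction of interest, and hypothetical inputs; the thresholds and terms follow one commonly cited version of the scale.

```python
from statistics import NormalDist

# Assumed qualitative scale: (upper probability bound, term).
SCALE = [
    (0.005, "most unlikely"),
    (0.05, "very unlikely"),
    (0.25, "unlikely"),
    (0.75, "possibly"),
    (0.95, "likely"),
    (0.995, "very likely"),
    (1.0, "almost certain"),
]


def chances(mean_change, se, swc):
    """Chances that the true change is a substantial increase, trivial, or a
    substantial decrease, using a normal approximation to the sampling
    distribution of the observed mean change (standard error `se`)."""
    dist = NormalDist(mu=mean_change, sigma=se)
    increase = 1 - dist.cdf(swc)
    decrease = dist.cdf(-swc)
    return increase, 1 - increase - decrease, decrease


def describe(p):
    """Map a probability (0-1) to its qualitative term."""
    for upper, term in SCALE:
        if p < upper:
            return term
    return SCALE[-1][1]


# Hypothetical example: mean change 1.6 km/h, standard error 0.4, SWC 0.4.
inc, triv, dec = chances(1.6, 0.4, 0.4)
print(f"increase {inc:.1%} ({describe(inc)}), trivial {triv:.1%}, decrease {dec:.1%}")
# prints "increase 99.9% (almost certain), trivial 0.1%, decrease 0.0%"
```

Because the SWC sets the boundary of the trivial zone, a larger SWC lowers the chance of a substantial change for the same data, which is how the choice of SWC feeds into these qualitative labels.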
